Why estimates fail, and what replaces them

Your team estimates in averages. But averages hide the range, and the range is what kills your deadline. The audit replaces single-point estimates with a probability based on how your team actually delivers. Not how they plan to.

What you get that you can't get from a dashboard

Most tools track flow metrics: cycle time, throughput, WIP. They're useful for the engineering team. But they don't answer the question your board, your client, or your founder is actually asking: are we going to hit the date we promised?

The audit takes your actual commitments, the ones you've already made, and attaches a probability to each one. Not a traffic-light status. Not an average. A specific number based on 10,000 simulated outcomes.

The output isn't a dashboard your team logs into. It's a specific probability attached to a specific promise, and a conversation about what to do with that number.

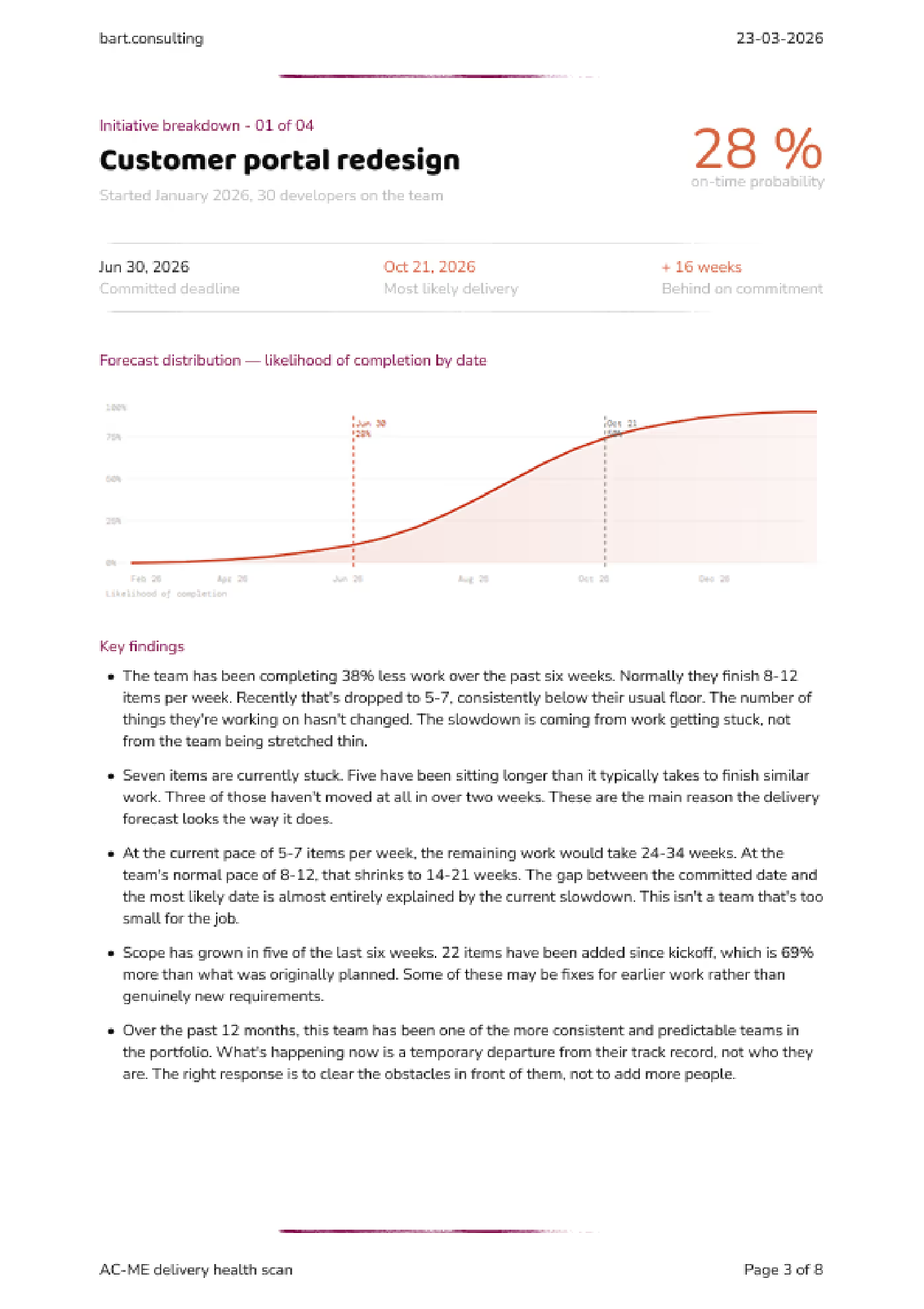

You committed to delivery by September 2. Based on your team's actual delivery patterns, there is a 28% chance you'll hit that date.

Most likely outcome: October 21 (± 10 days), roughly 7 weeks later than promised.

The gap is explained by: 7 items that have been in progress for over 3 weeks, and 69% scope growth since the initial commitment.

This is where most teams realise: the plan didn't fail, it was never real to begin with.

Download the full example report (PDF)

Why not just use ActionableAgile, LinearB, or Jellyfish?

You can. They're good at what they do: giving engineering teams dashboards to track their own flow. That's a different problem.

Those tools tell you where you are. The audit tells you where you're going to end up.

Most people who come to me already have metrics somewhere. What they don't have is someone willing to say "this has a 22% chance of landing" to the person who needs to hear it.

How the simulation works

Step one: I map how your team actually works

Every team uses its tools differently. "In Progress" means something different in your team than it does in mine. Before I touch a number, I build a clean model of how work actually moves through your pipeline: what the stages mean, where handoffs happen, where things stall.

If your Jira is a mess, that's expected. The mess isn't a blocker, it's where I start.

Step two: I map what you've promised versus what's left

Every open commitment, every piece of remaining work, and (this is usually the uncomfortable part) everything that got quietly added after you agreed on the scope.
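To make "quietly added" concrete, here is a minimal sketch of how scope growth could be measured. It assumes you have the list of item IDs at the time of the commitment and the list as it stands today; the function name, the IDs, and the choice to count growth as added items divided by committed items are all illustrative assumptions, not the audit's actual definition.

```python
def scope_growth(committed_ids, current_ids):
    """Return the items added after the commitment and the growth ratio.

    Assumption: growth = number of added items / number of committed items.
    Items removed from scope are ignored in this simple version.
    """
    committed, current = set(committed_ids), set(current_ids)
    added = current - committed
    growth = len(added) / len(committed) if committed else 0.0
    return sorted(added), growth


# Hypothetical data: four items were agreed, six are in scope today.
committed = ["A-1", "A-2", "A-3", "A-4"]
current = ["A-1", "A-2", "A-3", "A-4", "A-5", "A-6"]

added, growth = scope_growth(committed, current)
print(added, f"{growth:.0%}")  # ['A-5', 'A-6'] 50%
```

Counting items treats every item as the same size. That is a simplification, but it is usually enough to show whether the scope you are delivering is still the scope you agreed to.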

Step three: I run 10,000 simulations

I take your team's actual completion times, the full range, not an average, and use that to project forward. The fast weeks and the slow weeks. Each simulation picks different completion times from that range and plays the remaining work forward.

Some runs finish early. Some finish late. The pattern across all 10,000 runs tells you something a gut estimate never can: how likely you are to land on the date you promised.

The output isn't a single date. It's a probability curve. A narrow curve means a predictable team. A wide curve means high variability in outcomes. Both are useful to know.
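For readers who want to see the mechanics, here is a minimal sketch of this kind of simulation. It is not the audit's actual model: it assumes the history is expressed as items finished per week and simply resamples those weeks at random, which is one common way to run a Monte Carlo forecast. The history, the remaining item count, and all function names are hypothetical.

```python
import random


def simulate_weeks_to_finish(weekly_throughput, remaining_items, runs=10_000, seed=0):
    """Simulate `runs` futures; return the weeks each one needed to finish.

    Each simulated week draws one value at random from the team's real
    history, so slow weeks and fast weeks appear as often as they did.
    """
    if not any(t > 0 for t in weekly_throughput):
        raise ValueError("history needs at least one week with completed items")
    rng = random.Random(seed)
    results = []
    for _ in range(runs):
        done, weeks = 0, 0
        while done < remaining_items:
            done += rng.choice(weekly_throughput)
            weeks += 1
        results.append(weeks)
    return results


def probability_of_hitting(results, weeks_available):
    """Share of simulated runs that finished within the available weeks."""
    return sum(1 for w in results if w <= weeks_available) / len(results)


def percentile(results, pct):
    """Weeks needed so that roughly `pct` percent of runs have finished."""
    ordered = sorted(results)
    index = min(len(ordered) - 1, int(len(ordered) * pct / 100))
    return ordered[index]


# Hypothetical history: items finished in each of the last 12 weeks.
history = [3, 5, 0, 4, 2, 6, 1, 4, 3, 0, 5, 2]
results = simulate_weeks_to_finish(history, remaining_items=40)

print(f"Chance of finishing within 10 weeks: {probability_of_hitting(results, 10):.0%}")
print(f"50th percentile: {percentile(results, 50)} weeks")
print(f"85th percentile: {percentile(results, 85)} weeks")
```

The gap between the 50th and 85th percentile is a rough measure of how wide the curve is. A small gap means a predictable team; a large gap means a date quoted from the average is closer to a coin flip than a commitment.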

What the simulation captures, and what it honestly can't

The simulation uses the full range of delivery speeds your team has actually experienced. That includes the weeks where reviews dragged, where someone was out, where a dependency didn't land. It captures the patterns your team already lives with.

What it doesn't do is predict things that have never happened. If your lead architect gets poached next Tuesday, no model will see that coming. But that's not what catches most teams. Most teams get caught by the slow, ordinary accumulation of normal variation, the kind everyone dismisses as "just one of those weeks" until there have been eight of them.

What teams do with this

The audit is not the end. It's the moment things become clear enough to act.

- Renegotiate deadlines before they become broken promises

- Cut or reshape scope based on real capacity

- Identify where work is getting stuck and why

- Decide whether to add capacity, or stop overloading the team

You don't need more reporting. You need to know what to change while there is still time to change it.

What data I need (and what I never see)

I built an open-source exporter for Jira Cloud. You can read every line of it. It pulls one thing: when work moved from one status to another. That's it.

No ticket descriptions. No comments. No attachments. No code. No client names.
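To show why status transitions alone are enough, here is a minimal sketch that turns a transition log into completion times. The record layout (`item`, `from`, `to`, `at`), the status names, and the sample rows are assumptions for illustration; they are not the exporter's actual output format.

```python
from datetime import datetime

# Hypothetical transition log: one row per status change, nothing else.
transitions = [
    {"item": "A-1", "from": "To Do", "to": "In Progress", "at": "2024-03-04T09:00:00"},
    {"item": "B-2", "from": "To Do", "to": "In Progress", "at": "2024-03-05T14:00:00"},
    {"item": "A-1", "from": "In Progress", "to": "Review", "at": "2024-03-08T09:00:00"},
    {"item": "A-1", "from": "Review", "to": "Done", "at": "2024-03-11T09:00:00"},
]


def cycle_times_days(transitions, start_status="In Progress", done_status="Done"):
    """Days from an item's first start to its latest completion.

    Items that have started but not finished are left out of the result.
    """
    started, cycle = {}, {}
    # ISO 8601 timestamps in the same format sort correctly as strings.
    for t in sorted(transitions, key=lambda row: row["at"]):
        when = datetime.fromisoformat(t["at"])
        if t["to"] == start_status:
            started.setdefault(t["item"], when)  # keep the first start only
        elif t["to"] == done_status and t["item"] in started:
            cycle[t["item"]] = (when - started[t["item"]]).total_seconds() / 86400
    return cycle


print(cycle_times_days(transitions))  # {'A-1': 7.0}
```

Nothing in these rows says what the work was or who it was for. The timestamps and status names are all a forecast needs.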

You install it, you run it, you review the output before you send anything. Nothing leaves your environment without you choosing to share it.

If your team uses Azure DevOps, I can set up a comparable export, same principle, same boundaries.

If your security or legal team wants to review the process first, I'll walk them through it. It usually takes ten minutes.

Common questions

What if our Jira is a mess?

That's expected. I don't take your tracker's data at face value. I build a clean model of how work actually flows before I run any numbers. The mess isn't a blocker, it's where I start.

Can we run this ourselves?

The data extraction, yes. The exporter is open source. But the audit isn't just software, it's the workflow mapping, the commitment modelling, and the conversation about what to do with the number. That's the part that makes it useful.

Find out which deadline is already slipping

The audit is included in the Delivery Realign. Three audit cycles across eight weeks to measure where you start, track progress, and prove what changed.

See how the Delivery Realign works